What is GTS?

We built GTS to be simple and well suited to the task systems found in game engines. We want a framework that lets the game development community experiment with, and learn from, different scheduling algorithms easily. We also want a framework that lets us demonstrate state-of-the-art task scheduling algorithms. Finally, we want to encourage games to make better use of thread and task parallelism so they can compute more cool stuff and enable richer PC gaming experiences!

Natural Engine Integration

GTS is built around the Bring Your Own Engine (BYOE) or framework model, which lets an engine hook or override all low-level platform, container, and debugging implementations for tight integration. These are the same implementations that must be provided when porting GTS to a new platform.
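As a rough illustration of the hook-or-override idea, here is a minimal sketch in standard C++. The names `PlatformHooks`, `hooks`, `setHooks`, and `engineAlloc` are hypothetical and chosen only for this example; they are not GTS identifiers, and the actual GTS override mechanism may look quite different.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdlib>

// Default low-level implementations the library would fall back to.
inline void* defaultAlloc(std::size_t bytes) { return std::malloc(bytes); }
inline void defaultFree(void* ptr) { std::free(ptr); }

// Hypothetical table of overridable low-level services (not a GTS type).
struct PlatformHooks {
    void* (*allocate)(std::size_t) = defaultAlloc;
    void (*release)(void*) = defaultFree;
};

// Single shared hook table that library code would call through.
inline PlatformHooks& hooks() {
    static PlatformHooks table;
    return table;
}

// Engine-side entry point: replace the defaults with engine implementations.
inline void setHooks(const PlatformHooks& custom) {
    assert(custom.allocate != nullptr && custom.release != nullptr);
    hooks() = custom;
}

// Example engine allocator that counts allocations for its own tracking.
static int g_engineAllocCount = 0;
inline void* engineAlloc(std::size_t bytes) {
    ++g_engineAllocCount;
    return std::malloc(bytes);
}
```

An engine would fill in a `PlatformHooks` value with its own functions (for example `custom.allocate = engineAlloc;`), pass it to `setHooks` once at startup, and from then on every allocation made through `hooks().allocate` is routed to the engine.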

Multicore Scaling

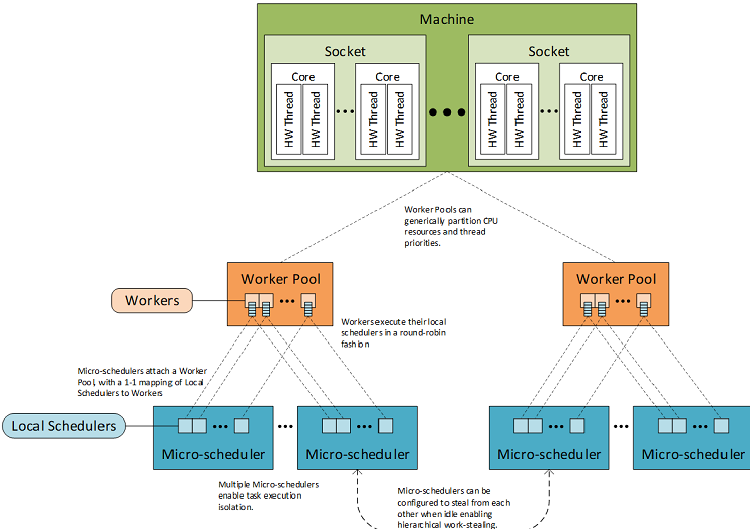

GTS provides a work-stealing microscheduler for localized, fine-grained task decompositions. Work stealing automatically load balances across available workers, requires minimal inter-thread communication, and has proven asymptotic bounds [1], all of which help it perform at scale. Further, GTS employs a novel back off strategy that gets idle worker threads out of the way of other threads (driver, middleware, and so on), so that all threads contribute fairly.
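The core of work stealing can be sketched in a few lines of standard C++. This is an illustrative simplification, not the GTS implementation: `WorkQueue` and `nextTask` are hypothetical names, a mutex stands in for the lock-free deques that production schedulers typically use, and victims are tried in a fixed round-robin order.

```cpp
#include <cassert>
#include <cstddef>
#include <deque>
#include <functional>
#include <mutex>
#include <utility>
#include <vector>

using Task = std::function<void()>;

// One queue per worker thread.
class WorkQueue {
public:
    void push(Task task) {
        std::lock_guard<std::mutex> lock(mutex_);
        tasks_.push_back(std::move(task));
    }
    // The owning worker takes its newest task (LIFO), which tends to be cache-warm.
    bool pop(Task& out) {
        std::lock_guard<std::mutex> lock(mutex_);
        if (tasks_.empty()) return false;
        out = std::move(tasks_.back());
        tasks_.pop_back();
        return true;
    }
    // A thief takes the oldest task (FIFO) from the opposite end.
    bool steal(Task& out) {
        std::lock_guard<std::mutex> lock(mutex_);
        if (tasks_.empty()) return false;
        out = std::move(tasks_.front());
        tasks_.pop_front();
        return true;
    }
private:
    std::mutex mutex_;
    std::deque<Task> tasks_;
};

// Finds work for worker `self`: local queue first, then the other workers' queues.
bool nextTask(std::vector<WorkQueue>& queues, std::size_t self, Task& out) {
    assert(self < queues.size());
    if (queues[self].pop(out)) return true;
    for (std::size_t i = 1; i < queues.size(); ++i) {
        std::size_t victim = (self + i) % queues.size();
        if (queues[victim].steal(out)) return true;
    }
    return false;  // nothing anywhere: the caller would back off here
}
```

Each worker thread would loop on `nextTask` with its own index and run whatever it returns. Load balancing falls out of the structure: a worker only touches another queue when its own is empty, which is why inter-thread communication stays low while work is plentiful.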

Complexity Management

GTS contains two higher-level abstractions: parallel patterns and a macro scheduler. Parallel patterns abstract the complexity of the microscheduler into composable algorithms like parallel-for, parallel-reduce, and so on. The macro scheduler organizes chunks of microscheduler and parallel-pattern work into a task graph (a directed acyclic graph), which defines a happens-before relationship for the entire system. The macro scheduler then schedules this graph so that its nodes execute in an optimal order across all compute devices.
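To make the happens-before idea concrete, the sketch below builds a small task graph and runs its nodes in dependency order using Kahn's topological sort. It is an assumption-laden illustration rather than the GTS macro scheduler: `TaskGraph`, `addNode`, `addEdge`, and `run` are hypothetical names, and execution is single-threaded, whereas a real macro scheduler would hand ready nodes to workers or other compute devices.

```cpp
#include <cassert>
#include <cstddef>
#include <functional>
#include <queue>
#include <utility>
#include <vector>

struct TaskGraph {
    std::vector<std::function<void()>> work;           // one callable per node
    std::vector<std::vector<std::size_t>> successors;  // node -> nodes that depend on it
    std::vector<std::size_t> predecessorCount;         // incoming edges per node

    std::size_t addNode(std::function<void()> fn) {
        work.push_back(std::move(fn));
        successors.emplace_back();
        predecessorCount.push_back(0);
        return work.size() - 1;
    }

    // Declares that `before` happens-before `after`.
    void addEdge(std::size_t before, std::size_t after) {
        assert(before < work.size() && after < work.size());
        successors[before].push_back(after);
        ++predecessorCount[after];
    }

    // Runs each node only after all of its predecessors have run.
    // Returns the number of nodes executed; fewer than work.size() means a cycle.
    std::size_t run() {
        std::vector<std::size_t> pending = predecessorCount;
        std::queue<std::size_t> ready;
        for (std::size_t i = 0; i < pending.size(); ++i) {
            if (pending[i] == 0) ready.push(i);
        }
        std::size_t executed = 0;
        while (!ready.empty()) {
            std::size_t node = ready.front();
            ready.pop();
            work[node]();
            ++executed;
            for (std::size_t next : successors[node]) {
                if (--pending[next] == 0) ready.push(next);
            }
        }
        return executed;
    }
};
```

For example, adding nodes for input, physics, and rendering with edges input to physics and physics to rendering guarantees that order regardless of the order in which the nodes were added. Nodes with no path between them are unordered, and that is the freedom a scheduler can exploit to run them in parallel.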

Figure 1. GTS Microscheduler.

Tuning

GTS provides two major tuning points.

- Back Off Tuning: GTS abstracts all back off strategies for spinning, locking, and thread suspension so that the system can be tuned to each unique workload.

- Scheduler Customization: While the GTS microscheduler’s primary mode of scheduling is work stealing, developers can also use work sharing and work streaming for fine-grained tasks. Further, the GTS macro scheduler implementation can be customized through a set of interfaces to extend the provided weakly dynamic [2] scheduler.
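The back off tuning point above can be pictured as a small, configurable escalation policy: spin briefly, then yield, then suspend. The following is a minimal sketch under stated assumptions, not GTS code. `BackoffConfig`, `BackoffStage`, and `Backoff` are hypothetical names, the default values are arbitrary placeholders, and a timed sleep stands in for true thread suspension (a real system would more likely block on a semaphore or condition variable).

```cpp
#include <cassert>
#include <chrono>
#include <thread>

// Hypothetical tuning knobs; the defaults are placeholders, not recommendations.
struct BackoffConfig {
    unsigned spinLimit = 64;    // failed attempts handled by plain spinning
    unsigned yieldLimit = 16;   // further attempts handled by yielding the core
    std::chrono::microseconds sleepTime{50};  // stand-in for thread suspension
};

enum class BackoffStage { Spin, Yield, Sleep };

class Backoff {
public:
    explicit Backoff(const BackoffConfig& config) : config_(config) {
        assert(config_.sleepTime.count() >= 0);
    }

    // Call each time the worker fails to find work. Escalates from spinning
    // to yielding to sleeping, and reports which stage was used.
    BackoffStage wait() {
        if (attempts_ < config_.spinLimit) {
            ++attempts_;
            return BackoffStage::Spin;  // return immediately so the caller retries
        }
        if (attempts_ < config_.spinLimit + config_.yieldLimit) {
            ++attempts_;
            std::this_thread::yield();
            return BackoffStage::Yield;
        }
        std::this_thread::sleep_for(config_.sleepTime);
        return BackoffStage::Sleep;
    }

    // Call after work is found so the next idle period starts with spinning again.
    void reset() { attempts_ = 0; }

private:
    BackoffConfig config_;
    unsigned attempts_ = 0;
};
```

A latency-sensitive workload might raise `spinLimit` so that workers react quickly to new tasks, while a workload that shares cores with driver or middleware threads might lower it so that idle workers get out of the way sooner.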

What is Not GTS?

GTS is not a product. It is a sandbox where the game development community can experiment with various methods of scheduling work onto an ever-evolving hardware landscape. We encourage developers to fork, integrate, modify and, hopefully, contribute code or write about successful alterations. We expect all code releases to be stable, tested, and versioned. The implementation will evolve over time, possibly with interface-breaking changes.

Where to Get GTS

The GTS repo is located on GitHub. Examples and tutorials are maintained alongside the source so that they stay up to date. For further instructions, see the readme. If you have any comments about how we can make the material more accessible, please let us know through GitHub comments.