This article describes how to configure a rate limiting instance on ingress traffic for a Data Plane Development Kit (DPDK) interface on Open vSwitch* (OvS) with DPDK. It is written for network administrators who want to use Open vSwitch rate limiting to guarantee reception rate performance for DPDK port types in their Open vSwitch server deployments. This article is a companion to the QoS Configuration and Usage for Open vSwitch* with DPDK article: rate limiting and QoS operate on the receive (RX) and transmit (TX) paths respectively, and each is set up and used through its own utility commands.

Note: At the time of writing, rate limiting for OvS with DPDK is only available on the OvS master branch. Users can download the OvS master branch as a zip here. Installation steps for OvS with DPDK are available here.

Rate Limiting in OvS with DPDK

Before we configure rate limiting, let’s first discuss how it is different from QoS and how it interacts with traffic in the vSwitch.

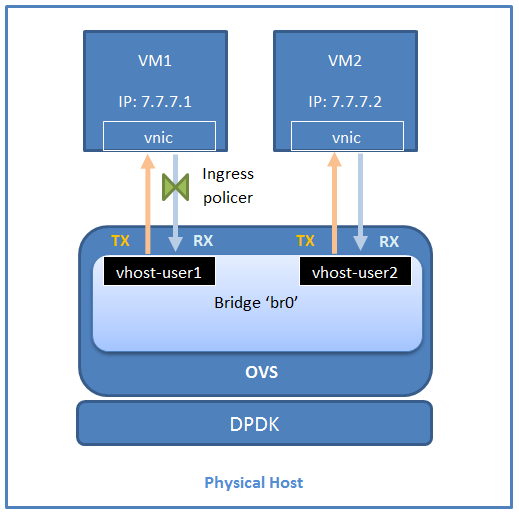

Whereas QoS operates on egress traffic, that is, traffic transmitted from a port on OvS, rate limiting in OvS with DPDK operates only on ingress traffic received on a port of the vSwitch. Rate limiting is implemented in OvS with DPDK using an ingress policer (similar to the egress policer QoS type supported by OvS with DPDK). An ingress policer simply drops packets once a certain reception rate is surpassed on the interface (a token bucket implementation). For a physical device, the ingress policer drops traffic that is received via a NIC from outside the host. For a virtual interface, that is, a DPDK vhost-user port, it drops traffic that is transmitted from the guest to the vSwitch, in effect limiting the transmission rate of the guest on that port. Figure 1 illustrates this.

Figure 1: Rate limiting via an ingress policer configured for a vhost-user port.
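To make the token bucket behavior concrete, the following is a toy simulation in POSIX shell. It is a sketch under simplifying assumptions (fixed-size packets arriving at a fixed interval, integer arithmetic) and is not the OvS implementation; the `police` function and its arguments are hypothetical names chosen for illustration.

```shell
#!/bin/sh
# Toy token-bucket policer. Illustrative only; this is NOT the OvS code.
# Usage: police RATE_KBPS BURST_KB PKT_BITS INTERVAL_US COUNT
# Simulates COUNT packets of PKT_BITS bits, one every INTERVAL_US microseconds,
# and prints "<passed> <dropped>".
police() {
    rate_kbps=$1; burst_kb=$2; pkt_bits=$3; interval_us=$4; count=$5

    capacity=$((burst_kb * 1000))                 # bucket size in bits
    refill=$((rate_kbps * interval_us / 1000))    # bits earned per interval
    tokens=$capacity                              # the bucket starts full
    passed=0; dropped=0; i=0

    while [ "$i" -lt "$count" ]; do
        # Refill between arrivals, never beyond the bucket capacity.
        if [ "$i" -gt 0 ]; then
            tokens=$((tokens + refill))
            if [ "$tokens" -gt "$capacity" ]; then tokens=$capacity; fi
        fi
        # A packet passes only if enough tokens are available to cover it.
        if [ "$tokens" -ge "$pkt_bits" ]; then
            tokens=$((tokens - pkt_bits))
            passed=$((passed + 1))
        else
            dropped=$((dropped + 1))
        fi
        i=$((i + 1))
    done
    echo "$passed $dropped"
}

# 12000-bit (1500-byte) packets every 120 us is a 100 Mbps offered load,
# policed here at 10000 kbps with a one-packet (12 kb) bucket.
police 10000 12 12000 120 100
```

With these inputs the sketch passes 10 of the 100 packets and drops the rest, which mirrors a 100 Mbps stream being policed to about 10 Mbps.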

Test Environment

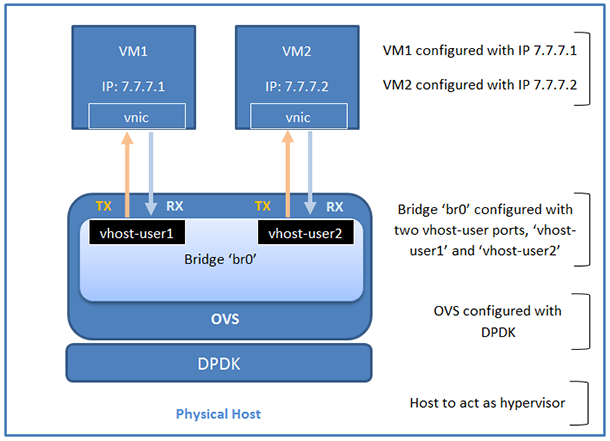

Figure 2: Test environment.

Note: Both the host and the virtual machines (VMs) used in this setup run Fedora* 23 Server 64-bit with Linux* kernel 4.4.6. Each VM has a virtual NIC (vNIC) that is connected to the vSwitch bridge via a DPDK vhost-user interface. The vNIC appears as a Linux kernel device (for example, "ens0") in the VM OS. Ensure there is connectivity between the VMs (for example, ping VM2 from VM1).

Rate Limiting Configuration and Testing

To test the configuration, make sure iPerf* is installed on both VMs. Users should ensure that the rpm version is matched to the OS guest version; in this case the Fedora 64-bit rpm should be used. If using a package manager such as ‘dnf’ on Fedora 23, the user can install iPerf automatically with the following command:

dnf install iperf

iPerf can be run in client mode or server mode. In this example, we will run the iPerf client on VM1 and the iPerf server on VM2.

Test Case without Rate Limiting Configured

From VM2, run the following to deploy an iPerf server in UDP mode on port 8080:

iperf -s -u -p 8080

From VM1, run the following to deploy an iPerf client in UDP mode on port 8080 with a transmission bandwidth of 100 Mbps:

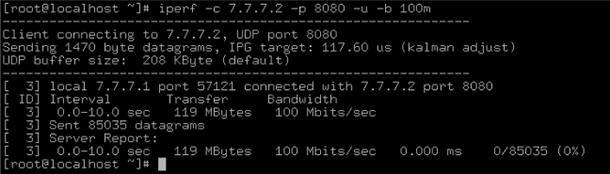

iperf -c 7.7.7.2 -u -p 8080 -b 100m

This will cause VM1 to attempt to transmit UDP traffic at a rate of 100 Mbps to VM2. After 10 seconds, this will output a series of values. Run these commands before rate limiting is configured, and you will see results similar to Figure 3. We are interested in the Bandwidth column in the server report.

Figure 3: Output without rate limiting via ingress policing configured.

The figures shown in Figure 3 indicate that a bandwidth of 100 Mbps was attained between the VMs.

Test Case with Rate Limiting Ingress Policer Configured

Now we’ll configure rate limiting using an ingress policer on vhost-user1 to police traffic at a rate of 10 Mbps with the following command:

ovs-vsctl set interface vhost-user1 ingress_policing_rate=10000 ingress_policing_burst=1000

The relevant parameters are explained below:

- ingress_policing_rate: The maximum rate (in kbps) that this VM should be allowed to send. For ingress policer creation, this value is mandatory. If no value is specified, the existing rate limiting configuration will not be modified.

- ingress_policing_burst: The burst size (in kb), which corresponds to the size of the token bucket. At a minimum, this parameter should be set to the size of the largest expected packet. If no value is specified, the default is 8000 kb.
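As a worked example of the units involved, the helper below converts a target rate in Mbps to the kbps value that ingress_policing_rate expects, and prints the resulting command rather than running it. The function name `policer_cmd` is a hypothetical helper introduced here for illustration; only the ovs-vsctl syntax itself comes from this article.

```shell
#!/bin/sh
# Hypothetical helper: build (but do not run) the ovs-vsctl command that
# configures an ingress policer.
# Usage: policer_cmd INTERFACE RATE_MBPS BURST_KB
policer_cmd() {
    iface=$1; rate_mbps=$2; burst_kb=$3
    rate_kbps=$((rate_mbps * 1000))   # ingress_policing_rate is expressed in kbps
    echo "ovs-vsctl set interface $iface" \
         "ingress_policing_rate=$rate_kbps" \
         "ingress_policing_burst=$burst_kb"
}

# Prints the 10 Mbps configuration used in this article.
policer_cmd vhost-user1 10 1000
```

Reviewing the printed command before running it is a cheap guard against unit mistakes, such as passing 10 where 10000 is intended.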

Repeating the iPerf UDP bandwidth test now, we see something similar to Figure 4.

Figure 4: Output with rate limiting via ingress policing configured.

Note that the attainable bandwidth with rate limiting configured is now 9.59 Mbps rather than 100 Mbps. iPerf has sent UDP traffic at 100 Mbps from its client on VM1 to its server on VM2; however, the traffic has been policed on the reception path of the vSwitch vhost-user1 port via rate limiting using an ingress policer. This has limited the traffic transmitted from the iPerf client on VM1 to ~10 Mbps.

Note that for TCP traffic, the ingress_policing_burst parameter should be set to a sizable fraction of the ingress_policing_rate; a general rule of thumb is more than 10%. This is because TCP reacts poorly when packets are dropped, which causes issues with packet retransmission.
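The rule of thumb above can be turned into a quick calculation. This sketch assumes the 10% figure is treated as a floor; `min_tcp_burst_kb` is a hypothetical helper name, and the right burst size for a given deployment may well be larger.

```shell
#!/bin/sh
# Hypothetical helper: smallest burst (in kb) that is at least 10% of a given
# ingress_policing_rate (in kbps). Rounds up so the result never falls below
# 10% of the rate; treat it as a floor, not a recommendation.
min_tcp_burst_kb() {
    rate_kbps=$1
    echo $(( (rate_kbps + 9) / 10 ))
}

min_tcp_burst_kb 10000   # the 10 Mbps rate used in this article
```

For the 10000 kbps rate configured earlier, this gives 1000 kb, so the burst value used in this article sits right at the floor and a larger value may suit TCP workloads better.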

The current rate limiting configuration for vhost-user1 can be examined with:

ovs-vsctl list interface vhost-user1
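The full listing contains every column of the Interface record. To show only the policing fields, the ovs-vsctl --columns option or the get command can be used. The wrapper functions and the OVS_VSCTL override below are hypothetical conveniences, sketched here so that the commands can also be dry-run with echo.

```shell
#!/bin/sh
# OVS_VSCTL can be overridden (for example with "echo") for a dry run.
OVS_VSCTL=${OVS_VSCTL:-ovs-vsctl}

# Hypothetical wrapper: list only the policing-related columns of an interface.
show_policer() {
    $OVS_VSCTL --columns=name,ingress_policing_rate,ingress_policing_burst \
        list interface "$1"
}

# Hypothetical wrapper: print just the configured rate (in kbps).
get_policer_rate() {
    $OVS_VSCTL get interface "$1" ingress_policing_rate
}
```

On the host, `show_policer vhost-user1` would print the three columns for the port configured in this article. A rate of 0 indicates that no ingress policer is active.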

To remove the rate limiting configuration from vhost-user1, simply set the ingress policing rate to 0 as follows (you do not need to set the burst size):

ovs-vsctl set interface vhost-user1 ingress_policing_rate=0

Conclusion

In this article, we have shown a simple use case where traffic is transmitted between two VMs over Open vSwitch* with DPDK configured with rate limiting via an ingress policer. We have demonstrated the utility commands used to configure rate limiting on a given DPDK port, to examine the current rate limiting configuration, and finally to clear a rate limiting configuration from a port.

Additional Information

For more details on rate limiting usage and parameters, refer to the rate limiting sections in vswitch.xml and ovs-vswitchd.conf.db.

Have a question? Feel free to follow up on the Open vSwitch discussion mailing list.

To learn more about Open vSwitch* with DPDK, check out the following videos and articles on Intel® Developer Zone and Intel® Network Builders University.

QoS Configuration and Usage for Open vSwitch* with DPDK

Open vSwitch* with DPDK Architectural Deep Dive

DPDK Open vSwitch*: Accelerating the Path to the Guest

About the Author

Ian Stokes is a network software engineer with Intel. His work is primarily focused on accelerated software switching solutions in user space running on Intel® architecture. His contributions to Open vSwitch* with DPDK include the OvS DPDK QoS API and egress/ingress policer solutions.