-

Developers

-

SDK 2.0

Get the latest Intel RealSense SDK 2.0 to kick off your project.

-

Documentation

Make the most of your Intel RealSense devices with extensive documentation and whitepapers.

-

Code samples

Get started fast with depth development.

-

Videos and tutorials

Check out tutorials and camera tuning hints.

-

Webinars and events

View our library of webinars from computer vision experts and sign up for the next one.

-

GitHub

Our main code repository, where you can find the latest releases and source code.

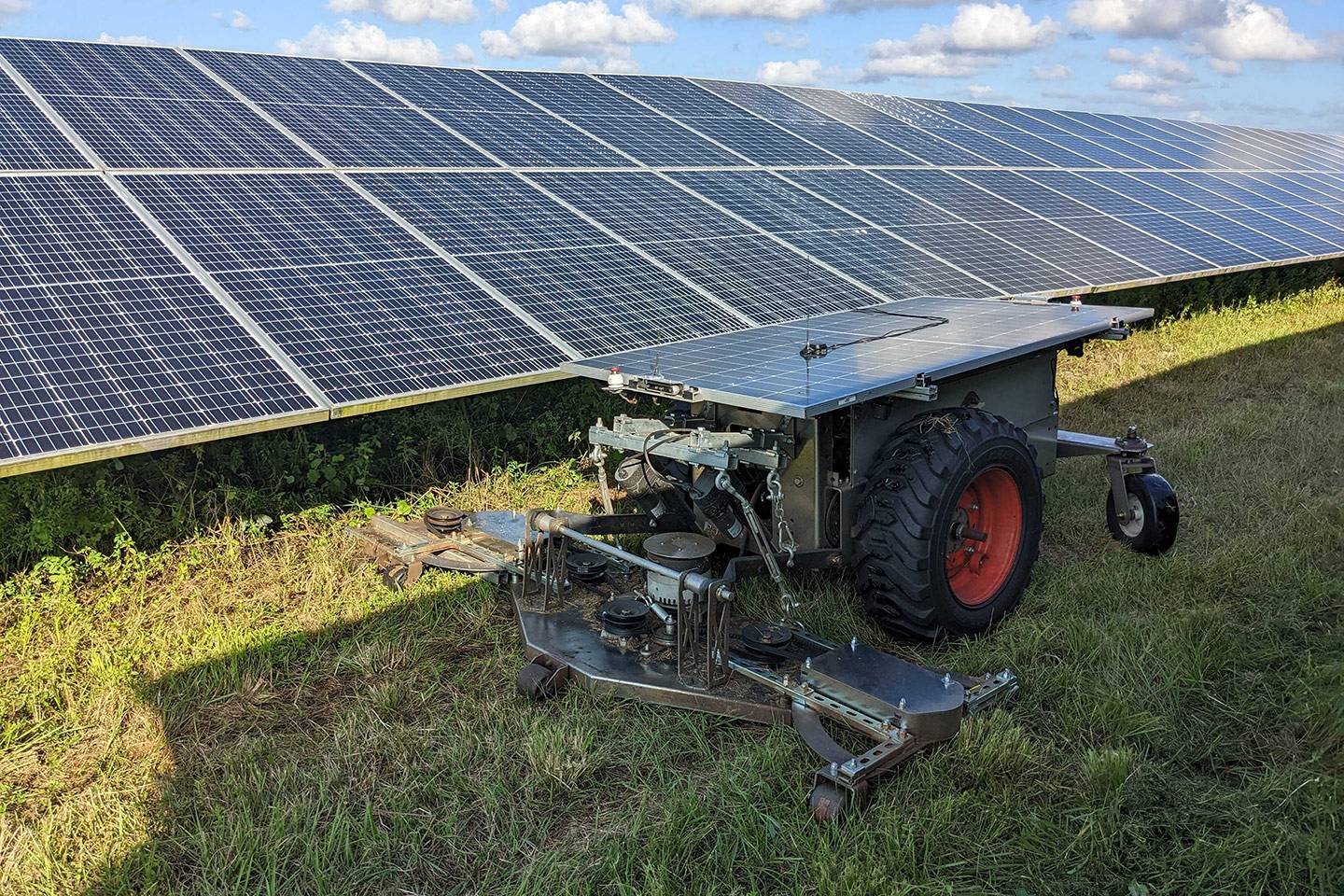

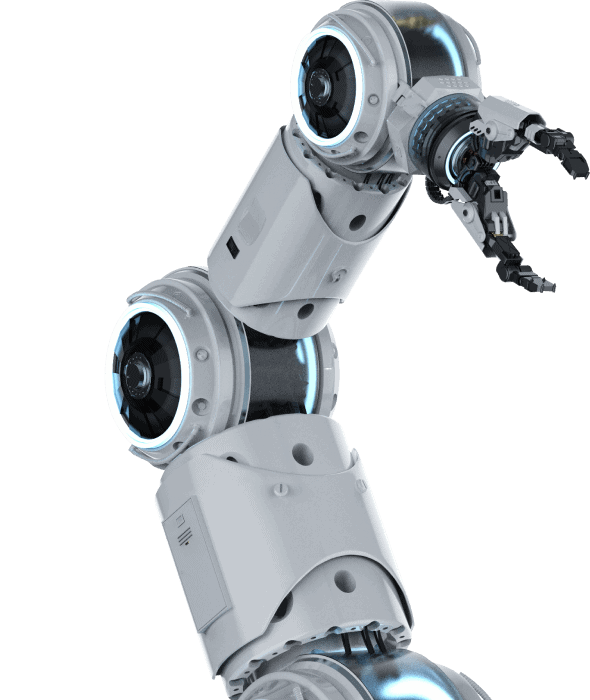

Computer vision in robotics comes into focus.

In the last few years, demand for computer vision technology that works reliably across a variety of conditions has grown rapidly. Learn more about how robots see and understand the world.

-

Where to Buy

Get the latest Intel RealSense products now.

-

Buy online

Buy directly from Intel RealSense. Fast worldwide shipping.

-

Authorized distributor

Find an authorized distributor near you.

-

Talk to sales

Let our sales team help you with your purchase.

Stay Updated

Get the latest Intel RealSense computer vision news, product updates, event and webinar notifications, and more.
